Why Generic AI Tools Fail for Complex Tasks in 2026

Generic AI tools are failing businesses at exactly the moment those businesses need them most. The promise was simple: plug in an AI tool, automate the repetitive work, watch productivity climb. The reality, for thousands of operations teams in 2026, is far messier.

The AI Disillusionment in Operations: Why 2026 Demands a New Approach

Consider a common scenario:

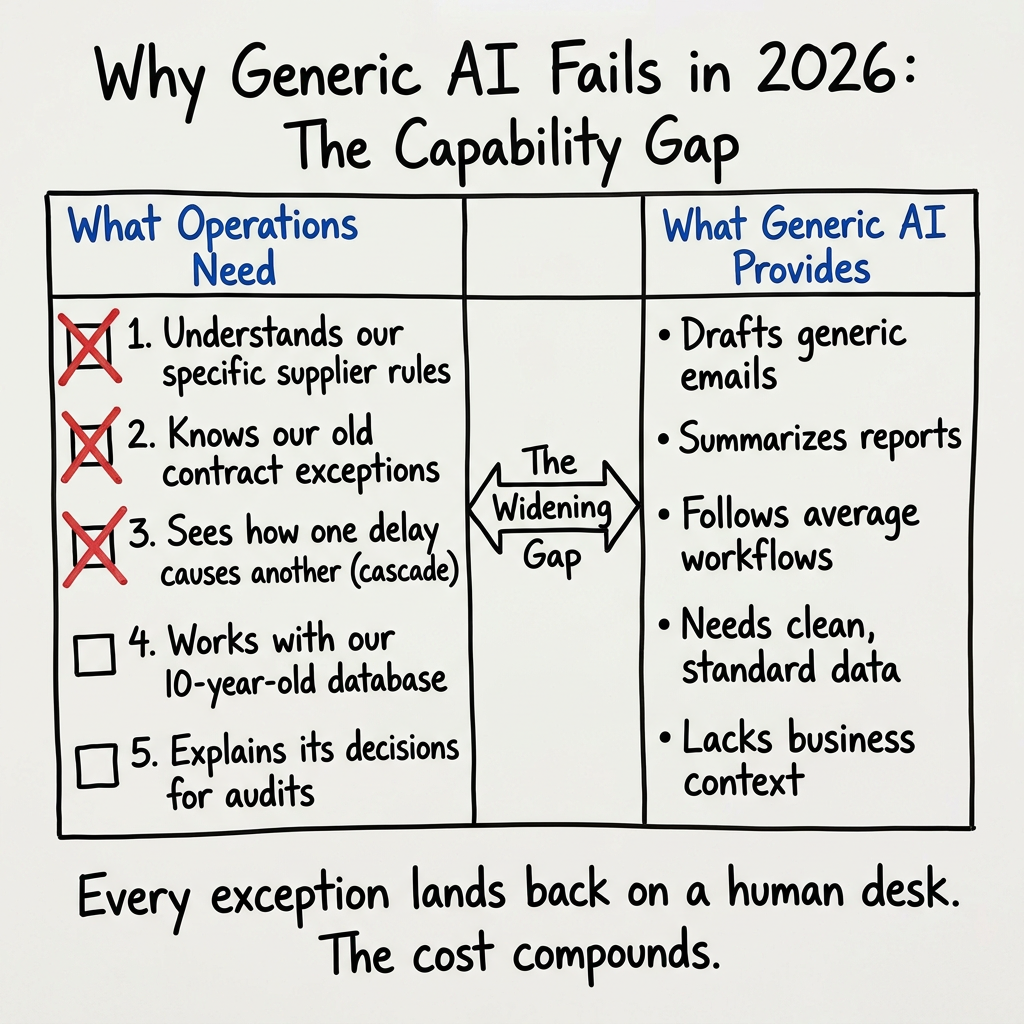

- A 150-person precision components manufacturer deploys a well-reviewed, off-the-shelf AI platform for supply chain coordination

- Within three weeks, the cracks appear — the tool drafts emails and summarises reports, but cannot interpret the firm's tiered supplier priority matrix

- It cannot account for seasonal lead-time exceptions in decade-old vendor contracts

- It cannot flag when a parts shortage will cascade into a missed delivery window for a key client

- Every exception — dozens each week — lands back on a human desk

That is not an edge case. That is the rule.

Generic AI is built for the average workflow. Most business operations are not average. They are shaped by years of institutional decisions, proprietary data structures, regulatory constraints, and competitive logic that no off-the-shelf model has ever seen.

The gap between what generic AI promises and what complex operations require is not closing — it is widening. Industry analysts suggest that as businesses push AI deeper into core workflows — procurement, compliance, client onboarding, demand forecasting — the cost of misalignment compounds across wasted hours, degraded data quality, and growing risk exposure.

The businesses pulling ahead in 2026 are not using better generic tools. They are abandoning the generic model entirely, in favour of AI built around how they actually work.

The Core Problem: Where Off-the-Shelf AI Breaks Down

Generic AI tools fail complex business tasks because they are trained for breadth, not depth. They handle the average case well. Your business is not the average case.

Complex tasks — the kind that actually drive business outcomes — share three characteristics: they involve multiple sequential decisions, they depend on proprietary data no public model has ever seen, and they contain conditional logic shaped by years of operational experience. Dynamic inventory forecasting. Custom client onboarding. Multi-tier supplier coordination. These are not simple prompts. They are processes with memory, rules, and consequences.

Three failure points appear consistently when off-the-shelf AI meets this kind of complexity.

| Failure Point | What Generic AI Does | What Complex Operations Require |
|---|---|---|
| Process Integration | Operates as a standalone tool | Reads and writes across your full software stack |
| Edge Case Handling | Defaults to a generic response or fails | Applies your specific business rules to exceptions |
| Training Data | Reflects broad internet and industry averages | Reflects your products, your clients, your logic |

The retail sector illustrates this clearly. A mid-size retailer deploys a well-rated generic chatbot to handle customer service volume. It manages simple queries — order status, store hours — without issue. Then a customer raises a return involving a manufacturer warranty, partial store credit from a previous visit, and physical damage caused during third-party delivery. The chatbot has no access to the warranty database. It cannot query the credit ledger. It has no protocol for disputed supplier liability.

Result: the chatbot escalates to a human. Retail operations teams running generic AI in this configuration report human escalation rates above 80% on complex service interactions, which is precisely the volume they were trying to reduce.

That is not a failure of AI. It is a failure of fit.

According to a January 2026 survey of 306 AI agent practitioners, reliability issues forced teams to abandon long-running tasks and restrict AI to simple, few-step workflows. The tools are not broken. They are just not built for what businesses actually need them to do.

What Changed in 2026: The New Realities of Business AI

Generic AI tools are not failing because the technology is immature. They are failing because the expectations placed on them have fundamentally changed. Businesses no longer want AI that answers questions. They want AI that runs operations — end to end, without a human relay race in between.

That shift is not subtle. It is structural.

Three forces converged in 2026 to make this gap impossible to ignore:

1. AI moved from feature to operational infrastructure. Businesses now expect AI to own entire workflows — not assist with individual steps. A tool that summarises a document is a feature. A system that reads a supplier contract, flags non-standard payment terms, routes it for legal review, and updates the procurement record without human input is infrastructure. Generic platforms were never designed for the latter.

2. Data sovereignty regulations closed the door on generic cloud APIs. Healthcare and financial services organisations can no longer route sensitive data through third-party AI APIs without significant compliance exposure. GDPR enforcement tightened. Sector-specific frameworks — HIPAA, SOX, FCA guidelines — became active enforcement priorities, not background considerations. Plugging patient records or loan files into a generic cloud model is, in many jurisdictions, no longer a grey area.

3. Complex tasks require multi-agent coordination, not a single model. A Salesforce study of CIOs found AI adoption skyrocketed 282% — but the growth is concentrated in multi-agent systems, where specialised agents divide complex tasks and hand off outputs between them. One agent analyses. Another cross-references. A third executes. Generic platforms cannot orchestrate this. They were built for single-turn interactions.
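The hand-off pattern in point 3 can be sketched in a few lines of Python. This is a minimal illustration only, assuming a toy contract-review workflow like the one in point 1: the `Task` record, the three agent functions, and the `STANDARD_TERMS` policy are hypothetical names invented for the example, and each "agent" is a plain function rather than a real model call.

```python
import re
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    """Shared state handed from one agent to the next (hypothetical shape)."""
    document: str
    payment_terms: list[str] = field(default_factory=list)
    flags: list[str] = field(default_factory=list)
    actions: list[str] = field(default_factory=list)


# Assumed company policy: which payment terms count as standard.
STANDARD_TERMS = {"net 30", "net 60"}


def analyse(task: Task) -> Task:
    # Agent 1: extract payment terms such as "net 90" from the contract text.
    task.payment_terms = re.findall(r"net \d+", task.document.lower())
    return task


def cross_reference(task: Task) -> Task:
    # Agent 2: compare the extracted terms against the assumed policy.
    task.flags = [t for t in task.payment_terms if t not in STANDARD_TERMS]
    return task


def execute(task: Task) -> Task:
    # Agent 3: decide the follow-up actions based on what was flagged.
    if task.flags:
        task.actions.append("route to legal review")
    task.actions.append("update procurement record")
    return task


PIPELINE: list[Callable[[Task], Task]] = [analyse, cross_reference, execute]


def run_pipeline(document: str) -> Task:
    """Pass one task through every agent in order."""
    task = Task(document=document)
    for agent in PIPELINE:
        task = agent(task)
    return task
```

Calling `run_pipeline("Payment due Net 90 from invoice date.")` flags the term and queues a legal review before the procurement update. The design point is the shared `Task` record: each specialised step reads what the previous one wrote, which is what a single-turn tool has no place to store.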

The healthcare sector makes the consequence concrete. A mid-size clinic deploys a generic form-filling AI to accelerate patient intake. It handles structured fields without issue. Then a patient arrives with handwritten physician referral notes, a medication history held in a legacy proprietary database, and a flagged allergy contradiction that requires cross-referencing both. The generic tool reads the form. It cannot interpret the handwriting. It cannot query the external database. It cannot identify the contradiction. Intake stalls. A clinician intervenes manually — which was precisely the bottleneck the tool was meant to remove.

That is not a technology failure. It is a scope failure. The tool was built for a simpler world. The business was not.

The organisations navigating this successfully are not buying better generic tools. They are deploying systems architected specifically around their workflows, their data environments, and their regulatory obligations — a level of specificity that off-the-shelf platforms are structurally incapable of delivering.

The 5 Hidden Costs of Using the Wrong AI Tool

The wrong AI tool does not simply underperform. It actively generates cost, risk, and operational drag — often invisibly, until the damage is already embedded in your systems and processes. These five costs are rarely captured in a software budget. They should be.

1. The Productivity Tax

Every workaround has a price. When a generic AI produces outputs that require manual correction — reformatted, re-checked, re-entered — the time saved by automation is partially or entirely consumed by remediation. Staff stop trusting the tool. They verify everything. The AI becomes a draft generator, not a decision-enabler.

2. Data Debt

Poor-quality AI outputs do not stay contained. They move downstream — into CRM records, ERP entries, procurement logs — where they compound. A misclassified customer segment corrupts a campaign. An incorrect inventory flag triggers a purchase order. Cleaning corrupted data from core systems is expensive, disruptive, and often incomplete. Prevention costs far less than remediation.

3. Strategic Blind Spots

Generic AI is trained on general data. It surfaces general insights. It cannot identify the margin pattern unique to your supplier mix, or the churn signal specific to your customer cohort. The competitive intelligence that matters most — the kind embedded in your proprietary data and operational history — remains invisible to a tool that was never built to see it.

4. Compliance Risk

| Industry | Relevant Framework | Risk if Non-Compliant |
|---|---|---|
| Financial Services | SOX, FCA Guidelines | Audit findings, regulatory penalties |
| Healthcare | HIPAA | Data breach liability, operational suspension |
| EU Operations | GDPR | Fines up to €20 million or 4% of global annual turnover, whichever is higher |
| Public Companies | SOX | Executive accountability, restatement risk |

A financial services firm discovered this at audit. Their generic document-review AI processed loan agreements at volume — but missed nuanced conditional clauses buried in non-standard contract language. Those terms were approved. The audit findings that followed were not a technology failure. They were a scope failure. The tool was not built for the complexity of the documents it was given.

5. Vendor Lock-in and Stagnation

When your operations are built around a generic platform's feature set, your competitive capability is capped by that platform's roadmap. You are not building advantage. You are renting access to a tool that thousands of competitors use identically. The moment the vendor pivots, raises prices, or discontinues a feature, your operational dependency becomes a vulnerability.

The cumulative effect of these five costs frequently exceeds the licence fee of the tool itself — often by a significant margin. The budget line looks manageable. The total cost of ownership does not.

How Bespoke AI Agents Are Built for Complexity

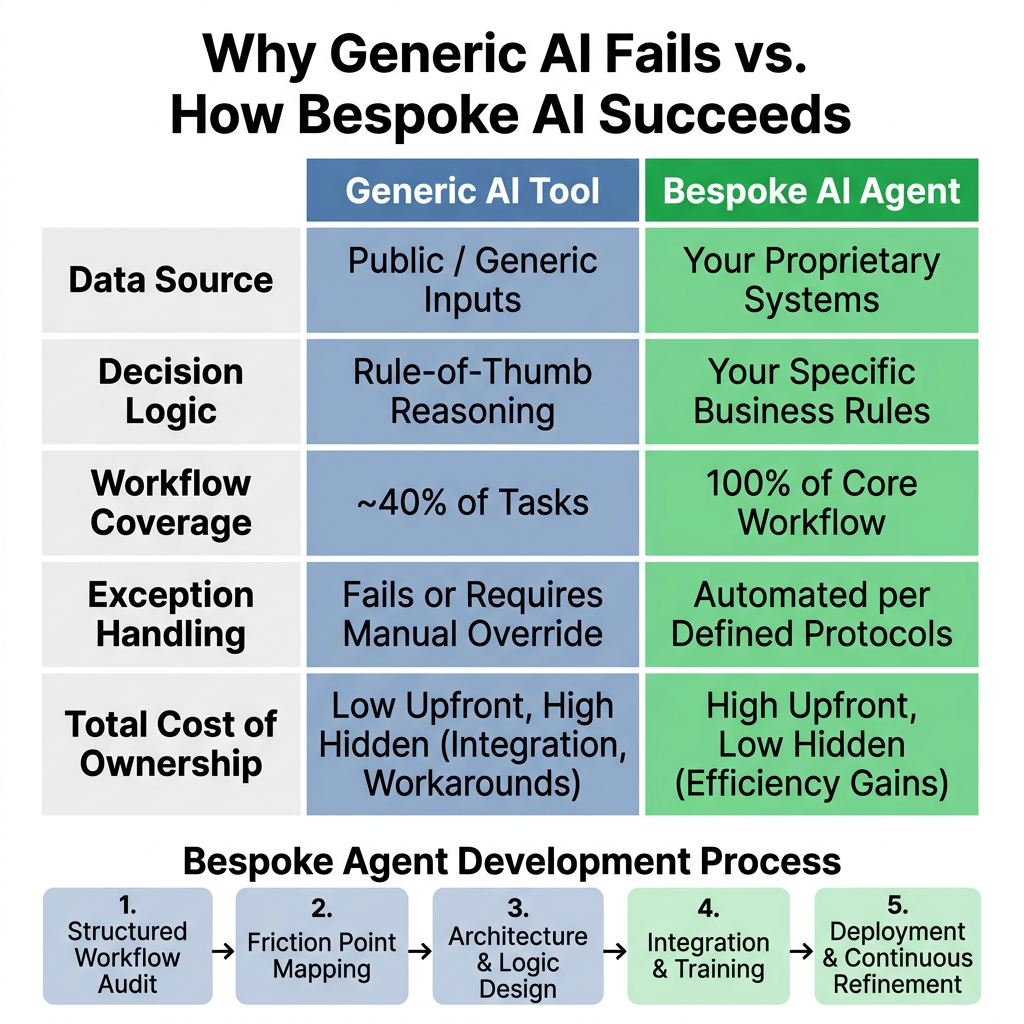

Generic AI tools are built to serve millions of users. Bespoke AI agents are built to serve one operation — yours. That distinction is not a marketing claim. It is the architectural difference between a tool that handles 40% of your workflow and a system that handles 100% of it, including the exceptions that break everything else.

Start with a counterintuitive truth: the most important part of building a bespoke AI agent is not the AI. It is the discovery work that precedes it.

Bespoke AI development begins with a structured audit of your actual workflows — not the documented versions, but the real ones. Where do your people manually intervene? Where does data move between systems without validation? Where do exceptions get handled by whoever happens to be available? Those friction points are not inefficiencies to work around. They are the design brief.

What emerges from that process is an agent architecture built on three foundations:

- Specialised knowledge: trained on your data, your terminology, and your historical decisions. Not general-purpose reasoning applied to your context, but domain-specific intelligence calibrated to your operations.

- Deep system integration: connected to your exact software stack, whether that is a legacy ERP, a proprietary CRM, or platforms no off-the-shelf tool was designed to bridge simultaneously.

- Conditional logic: the agent knows your rules. It understands that a purchase order above a certain threshold requires dual approval, or that a specific supplier's lead times require a 14-day buffer, not the industry-standard seven.
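To make the conditional-logic foundation concrete, here is a minimal Python sketch of the two rules mentioned above. The threshold value, the supplier identifier, and every function name are assumptions chosen for illustration; a real agent would load such rules from the business's own systems rather than hard-code them.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed figures for illustration only.
DUAL_APPROVAL_THRESHOLD = 10_000      # order value above which two approvers sign off
DEFAULT_BUFFER_DAYS = 7               # the "industry-standard" buffer
SUPPLIER_BUFFER_DAYS = {"ACME-CASTINGS": 14}  # hypothetical supplier needing 14 days


@dataclass
class PurchaseOrder:
    supplier_id: str
    amount: float
    needed_by: date


def approvals_required(po: PurchaseOrder) -> int:
    """Orders above the threshold need dual approval."""
    return 2 if po.amount > DUAL_APPROVAL_THRESHOLD else 1


def latest_order_date(po: PurchaseOrder, quoted_lead_days: int) -> date:
    """Work back from the need-by date using the supplier-specific buffer."""
    buffer_days = SUPPLIER_BUFFER_DAYS.get(po.supplier_id, DEFAULT_BUFFER_DAYS)
    return po.needed_by - timedelta(days=quoted_lead_days + buffer_days)
```

For a 25,000 order from the hypothetical `ACME-CASTINGS` with a 21-day quoted lead time and a need-by date of 30 June 2026, this yields two approvals and a latest order date of 26 May 2026. The same order from any other supplier would fall back to the seven-day buffer.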

Conceptual Architecture: How a Bespoke Agent Handles Complexity

| Layer | Generic Tool | Bespoke Agent |
|---|---|---|
| Data source | Public or generic inputs | Your proprietary systems |
| Decision logic | Rule-of-thumb reasoning | Your specific business rules |
| Exception handling | Fails or escalates blindly | Structured human-in-the-loop handoff |
| System integration | API-dependent, surface-level | Embedded across your full stack |
| Learning over time | Vendor-controlled updates | Continuous improvement on your outcomes |

Then there is human-in-the-loop design — the feature most generic tools ignore entirely. A well-built agent knows its own confidence thresholds. Below a defined threshold, it surfaces the decision to a human with relevant context pre-loaded: the data it found, the options it identified, and the trade-offs attached to each. The human decides. The agent executes and learns.
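A confidence-threshold handoff of this kind might look roughly like the following Python sketch. The 0.85 threshold, the `Decision` and `Handoff` structures, and the `route` function are all hypothetical; the feedback step in which the agent learns from the human's choice is deliberately left out.

```python
from dataclasses import dataclass
from typing import Union

CONFIDENCE_THRESHOLD = 0.85  # assumed cut-off; in practice tuned per workflow


@dataclass
class Option:
    label: str
    trade_off: str


@dataclass
class Decision:
    recommended: Option
    confidence: float
    evidence: list[str]
    alternatives: list[Option]


@dataclass
class Handoff:
    """Context pre-loaded for the human reviewer."""
    evidence: list[str]
    options: list[Option]


def route(
    decision: Decision, threshold: float = CONFIDENCE_THRESHOLD
) -> tuple[str, Union[Option, Handoff]]:
    # At or above the threshold, the agent acts on its own recommendation.
    if decision.confidence >= threshold:
        return ("execute", decision.recommended)
    # Below it, the agent surfaces the data it found and every option with its trade-off.
    handoff = Handoff(
        evidence=decision.evidence,
        options=[decision.recommended, *decision.alternatives],
    )
    return ("escalate", handoff)
```

The point of the `Handoff` object is that the reviewer receives the evidence and the trade-offs together, rather than a bare "please check this" escalation.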

This matters more than most businesses realise. A 2025 Gallup survey of over 20,000 workers found that 49% had never used AI at work, and just 12% were daily users. The gap is not enthusiasm — it is trust. Agents that escalate intelligently, rather than fail silently, are what close it.

Consider a manufacturing scenario. An agent monitors live equipment sensor data, cross-references anomaly patterns against historical maintenance logs, checks current supplier lead times, and — before a failure occurs — recommends a specific part order and technician dispatch schedule. Each step requires integration with a different system and logic built around that facility's specific protocols.
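As a rough sketch of that chain, the Python below strings the steps together under heavy simplification. The z-score anomaly test, the two-day safety margin, and all names are assumptions made for the example, and the maintenance history and supplier lead time are passed in as plain values where a real agent would query separate systems.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import mean, stdev
from typing import Optional

SAFETY_MARGIN_DAYS = 2  # assumed margin between technician visit and expected failure


@dataclass
class Recommendation:
    part: str
    order_by: date
    dispatch_on: date


def is_anomalous(baseline: list[float], latest: float, z_limit: float = 3.0) -> bool:
    """Flag a reading more than z_limit standard deviations from the baseline mean."""
    sigma = stdev(baseline)
    if sigma == 0:
        return latest != mean(baseline)
    return abs(latest - mean(baseline)) / sigma > z_limit


def recommend(
    today: date,
    baseline: list[float],
    latest: float,
    part: str,
    days_to_failure: int,   # from historical maintenance logs (assumed input)
    lead_time_days: int,    # from current supplier data (assumed input)
) -> Optional[Recommendation]:
    if not is_anomalous(baseline, latest):
        return None
    # Schedule the technician ahead of the expected failure date.
    dispatch_on = today + timedelta(days=days_to_failure - SAFETY_MARGIN_DAYS)
    # The part must arrive by the dispatch date, but cannot be ordered in the past.
    order_by = max(today, dispatch_on - timedelta(days=lead_time_days))
    return Recommendation(part=part, order_by=order_by, dispatch_on=dispatch_on)
```

Each argument to `recommend` stands in for a different system (sensors, maintenance logs, supplier data), which is where the integration effort described above actually sits.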

That is not automation. That is operational intelligence — and it requires specialist design, not software selection.

Common Objections to Custom AI (And Why They're Misguided)

Most objections to custom AI dissolve the moment you compare them against the actual cost of the alternative. The real risk in 2026 is not building something bespoke — it is continuing to pay for a generic tool that cannot do the job.

Here are the three objections that come up most, and why they miss the point.

| Objection | Why It Misses the Point |
|---|---|
| "It's too expensive." | This frames the comparison incorrectly. The question is never custom vs. free; it is custom vs. hidden costs already accumulating. Licence fees, manual workarounds, error correction, data cleanup, and staff hours lost compensating for a tool's limitations add up fast. A system that solves the problem costs less than one that almost solves it, indefinitely. |
| "It will take too long." | Specialist AI teams work with modular, proven components, not blank slates. As Manufacturing Today noted in early 2026, one of the most common integration mistakes is pursuing moonshot projects instead of incremental wins. Delivery of a targeted agent addressing a single critical workflow is typically measured in weeks, not quarters. |
| "We lack technical expertise." | That is precisely the point of a partner. Your organisation holds the domain knowledge: the business rules, edge cases, and operational context. Technical execution is someone else's responsibility. As the World Economic Forum observed, the best AI outcome in 2026 is rarely the best model; it is the best integration. |

Consider a logistics company whose generic route optimiser ignored driver union scheduling rules, failed to honour client delivery windows, and had no visibility into real-time warehouse congestion. Custom operations automation was not a preference — it was the only path to a system reflecting how their business actually ran.

The objection was cost. The reality was that the generic tool was already costing more.

The Bottom Line for SMB Leaders in 2026

The era of using generic AI for core business operations is over. Complexity, regulation, and competitive pressure have made that approach a liability, not a shortcut.

The choice has shifted. It is no longer cheap-and-generic versus expensive-and-custom. It is between a tool that quietly accumulates cost and risk, and a partner-led solution that builds real operational capability. One looks affordable on a procurement sheet. The other performs on a balance sheet.

Generic tools were built for average problems. Your business does not have average problems.

The first step is not purchasing software. It is understanding exactly where your current workflows break down — and where a tailored agent could close that gap permanently. BespokeWorks offers a complimentary Process Discovery Session to map precisely that: where generic AI fails your operations, and what a purpose-built solution would actually change.

The audit costs nothing. The status quo does.